
- {

- "fetched_at": "2025-12-12T13:07:57.816779Z",

- "total_sources": 16,

- "successful": 19,

- "failed": 0,

- "results": [

- {

- "name": "**Very Important Agents** \\\\\n\\\\\nMy recent Changelog and Friends podcast appearance and the Claude Code plugins that help me get real work done with AI.",

- "url": "https://nicknisi.com/posts/very-important-agents",

- "type": "article",

- "status": "success",

- "content": "Very Important Agents \\| Nick Nisi\n\nI recently appeared on [Changelog and Friends](https://changelog.com/friends/120) to talk about agents, the Bun acquisition by Anthropic, and how I\u2019ve been using Claude Code in my daily workflow. The conversation kept circling back to a question I find interesting: how do we use AI to get real work done without producing generic _slop_?\n\nI\u2019ve been building toward an answer - two custom Claude Code plugins called [Essentials](https://github.com/nicknisi/claude-plugins/tree/main/plugins/essentials) and [Ideation](https://github.com/nicknisi/claude-plugins/tree/main/plugins/ideation) that help me stay productive while keeping my code and ideas authentically mine. Rather than let that podcast conversation disappear into the archives, I wanted to share what I\u2019ve learned.\n\n## Keeping Code Clean with [Essentials](https://github.com/nicknisi/claude-plugins/tree/main/plugins/essentials)\n\nThe Essentials plugin is my first line of defense against code complexity. It has two components I reach for constantly.\n\n**[Code Simplifier](https://github.com/nicknisi/claude-plugins/blob/main/plugins/essentials/agents/code-simplifier.md)** is an agent that refactors code to improve readability without changing functionality. I recently used it on a nested callback situation that had grown organically over several iterations. You know the type - it started simple, then requirements changed, then edge cases appeared, and suddenly you\u2019re staring at something that works but makes your brain hurt. The agent untangled it into something I could actually follow. Same behavior, less cognitive load.\n\n**[de-slopify](https://github.com/nicknisi/claude-plugins/blob/main/plugins/essentials/commands/de-slopify.md)** is a command that removes AI-generated patterns from code. 
After running Claude on a feature, I sometimes notice overly verbose comments, unnecessary abstractions, or redundant explanations that scream \u201can AI wrote this.\u201d de-slopify catches these patterns and strips them out. The result is code that looks like a human wrote it - because the important parts are still mine, just without the AI fingerprints.\n\nThis might seem contradictory. Using AI to remove evidence of AI? But that\u2019s exactly the point. I want AI assistance with the mechanics, not AI aesthetics polluting my codebase.\n\n## From Brain Dump to Structure with [Ideation](https://github.com/nicknisi/claude-plugins/tree/main/plugins/ideation)\n\nHere\u2019s where things get meta. The [Ideation skill](https://github.com/nicknisi/claude-plugins/blob/main/plugins/ideation/skills/ideation/SKILL.md) takes messy stream-of-consciousness thoughts and transforms them into structured artifacts.\n\nI\u2019m literally using this plugin right now to write this post.\n\nThat\u2019s not a hypothetical. It\u2019s the truth.\n\nMy process started with a voice dictation where I rambled about the podcast, what I wanted to cover, and why authenticity matters when using AI for content. Just scattered thoughts, no structure. The Ideation plugin took that brain dump and produced a contract (what I\u2019m committing to), a PRD (what the post needs to accomplish), and a spec (how to actually write it).\n\nThe value here isn\u2019t that AI wrote my outline. It\u2019s that AI removed the blank page problem. I went from \u201cI should write about this podcast\u201d to having a clear structure to follow in minutes instead of days. The ideas are still mine. The organization just got easier.\n\n## AI as Amplifier, Not Replacement\n\nThese plugins represent a philosophy I keep coming back to: AI should amplify your capabilities, not replace your judgment.\n\nThere are two problems I\u2019m trying to solve. First, the barrier problem. I have ideas and things I want to build. 
But life is busy, and the gap between \u201cI should do this\u201d and actually having a plan is often too wide to cross. AI can lower that barrier by handling the organizational grunt work.\n\nSecond, the slop problem. AI-generated code often has a distinctive smell - overly verbose, over-abstracted, over-commented. I don\u2019t want that in my codebase. I want to use AI in a way that makes me more productive without making my code less mine.\n\nThe plugins I\u2019ve built are my answer to both problems. Essentials keeps my code clean. Ideation turns chaos into structure. Each one is intentionally designed to amplify rather than replace.\n\nThe key is being intentional. Use AI for the right things. Use it in ways that lower barriers without raising slop levels. Use it as an amplifier, not a replacement.\n\n## Try It Yourself\n\nAll of these plugins live in my [Claude plugins marketplace](https://github.com/nicknisi/claude-plugins). You can install the whole collection with a single command:\n\n```\n/plugin marketplace add nicknisi/claude-plugins\n```\n\nThat gives you access to Essentials, Ideation, and a few other plugins I\u2019ve been building. Browse the repo if you want to see how they work under the hood - or just install and experiment.\n\n**Resources:**\n\n- [Claude plugins marketplace](https://github.com/nicknisi/claude-plugins) \\- The full collection\n- [Claude Code Documentation](https://docs.anthropic.com/en/docs/claude-code) \\- Getting started with Claude Code\n- [Changelog and Friends Episode 120](https://changelog.com/friends/120) \\- The podcast conversation that started this\n\nLet me know how it works for you. 
I\u2019m [@nicknisi.com](https://bsky.app/profile/nicknisi.com) on Bluesky.\n\n## 4 likes\n\n[](https://bsky.app/profile/tommis.fi)[](https://bsky.app/profile/programmerman.net)[](https://bsky.app/profile/drk.wtf)[](https://bsky.app/profile/megalithic.io)\n\n### Comments\n\n5 comments from [Bluesky](https://bsky.app/profile/nicknisi.com/post/3m7i3ygaets2v), sorted by newest first.\n\n[\\\\\nKilian Valkhof @kilianvalkhof.com](https://bsky.app/profile/did:plc:z6uec3g7xgkvxpy442663waq) [\"all the browser suck now\"\\\\\n\\\\\now, straight to the heart.](https://bsky.app/profile/did:plc:z6uec3g7xgkvxpy442663waq/post/3m7ibujxh6k2l)\n\n[\\\\\nNick Nisi @nicknisi.com](https://bsky.app/profile/did:plc:qcyz4wcmgnz4mzxevrsrf6j6) [Polypane is a notable exception to this! Still love using this as my debugging/development browser!](https://bsky.app/profile/did:plc:qcyz4wcmgnz4mzxevrsrf6j6/post/3m7imtvnszs2t)\n\n[\\\\\nKilian Valkhof @kilianvalkhof.com](https://bsky.app/profile/did:plc:z6uec3g7xgkvxpy442663waq) [phew!](https://bsky.app/profile/did:plc:z6uec3g7xgkvxpy442663waq/post/3m7rzllo2zs2t)\n\n[\\\\\nVLDMR VRNKN @vladimir.varank.in](https://bsky.app/profile/did:plc:wzfphsrpkjyf3nlg3befg4l5) [How do you review/iterate on what an agent did, when the result isn't just right (and you know exactly how the right result looks like)? Ie. do you find it simpler to continue talking to the agent? Or do you jump in and do the last mile of work yourself, and ask to refresh the agent's context after?](https://bsky.app/profile/did:plc:wzfphsrpkjyf3nlg3befg4l5/post/3m7ny2jpye22y)\n\n[\\\\\nNick Nisi @nicknisi.com](https://bsky.app/profile/did:plc:qcyz4wcmgnz4mzxevrsrf6j6) [It varies by situation but for the most part I instruct the agent to fix. 
My reasoning is that I want that conversation and give it explicit instructions to push back on anything.](https://bsky.app/profile/did:plc:qcyz4wcmgnz4mzxevrsrf6j6/post/3m7qslwjeh22t)\n\n[Speaking at Conferences](https://nicknisi.com/posts/speaking-at-conferences)\n\n\n\n## Nick Nisi\n\nA passionate TypeScript enthusiast, podcast host, and dedicated community builder.\n\nFollow me on\n[Bluesky](https://bsky.app/profile/nicknisi.com).",

- "title": null,

- "description": "My recent Changelog and Friends podcast appearance and the Claude Code plugins that help me get real work done with AI.",

- "fetched_at": "2025-12-12T13:07:52.753737Z",

- "source_name": "Chris Dzombak",

- "source_url": "https://www.dzombak.com/blog",

- "relevant_keyword": "claude"

- },

- {

- "name": "Databricks' Strategic Playbook: Reynold Xin on Growth, AI, and the Future of Data Infrastructure",

- "url": "https://leehanchung.github.io/blogs/2025/11/06/raynold-xin-databricks/",

- "type": "article",

- "status": "success",

- "content": "_Reynold Xin, Apache Spark\u2019s #1 committer famous for \u201cdeleting more code than others wrote,\u201d reveals how Databricks maintains 60% YoY growth while competitors struggle. In a candid interview at Hysta Rising, he shares the contrarian strategies, technical decisions, and AI-first approach shaping the future of data infrastructure._\n\n\n\n## The Growth Story: Databricks vs. Snowflake\n\nDatabricks has maintained impressive growth metrics, growing 60% year-over-year recently and over 50% YoY currently. Internal growth rates are even higher, though undisclosed. This stands in stark contrast to Snowflake\u2019s current 20-30% YoY growth at similar revenue levels.\n\nXin provided crucial context: just 2-3 years ago, Snowflake was growing 100% YoY and was considered the fastest-growing public company in history for enterprise go-to-market. However, their decline illustrates a critical strategic lesson.\n\n### The GTM Investment Trap\n\nWhen Wall Street shifted focus from growth to profitability post-ZIRP era, many companies responded by pausing go-to-market (GTM) hiring. This creates a dangerous illusion: immediate profitability improvement masks a growth time bomb.\n\nWhy? Account executives and solution architects typically take 1-2 years to become productive. \u201cPausing does not have any impact on growth for the next year or two. So momentum will continue for a year and then collapse,\u201d Xin explained.\n\nDatabricks took the contrarian approach\u2014doubling down on GTM investments while competitors pulled back. This strategic patience is now paying dividends as competitors\u2019 growth rates plummet.\n\n## The AI Acceleration\n\nMost enterprises remain primitive in AI/ML/data science adoption, which traditionally generated much smaller revenue than data warehousing. However, 2023 marked a turning point, with growth rates accelerating partly due to generative AI adoption. 
Databricks now generates over $1 billion ARR from AI products alone.\n\n## M&A Strategy: Acquiring DNA, Not Revenue\n\nDatabricks\u2019 acquisition strategy differs fundamentally from traditional enterprise approaches:\n\n- **Focus on DNA over revenue**: \u201cThe thesis is never about getting revenue, but getting DNA. Revenue is validation.\u201d\n- **Target founders with startup DNA**: Seek founders who\u2019ve gone through the \u201c5-10 year grind\u201d with hands-on customer experience\n- **Empower acquired teams**: Give them resources to drive new product growth\n- **Contrast with traditional M&A**: Unlike Salesforce or Cisco, which primarily acquire for revenue\n\n## The OpenAI Partnership\n\nOpenAI is a significant Databricks customer, and the partnership includes:\n\n- Access to specific models with guaranteed capacity\n- $100M capacity deal for on-demand usage\n- Strategic decision to focus on high-margin software rather than competing in model training\n- Recognition that model serving has \u201chorrible margins\u201d compared to software\u2019s 80-90% margins\n\n## Pivotal Moments in Databricks\u2019 Evolution\n\n### 2015: The PLG Pivot\n\nStarted and ended the year with $1M ARR after attempting product-led growth (PLG). The key learning: GTM motion must match the product. Databricks requires VPC peering and production database connections\u2014sensitive operations. This means potential customers can\u2019t simply swipe on a credit card to obtain the service.\n\n### 2017: Microsoft Azure Partnership\n\nThis partnership became a growth catalyst, with Microsoft and Databricks both selling Azure Databricks. At one point, half of growth came from this channel, allowing more efficient sales team scaling.\n\n### 2020: Multi-Product Expansion\n\nTransitioning from single to multiple products marked a fundamental shift. 
As Xin noted, \u201cMost companies in Silicon Valley never accomplished second product success.\u201d This multi-year journey included rapid adaptations for generative AI.\n\n## Leadership Evolution: From Coder to Executive\n\nXin\u2019s personal journey reflects a common founder transition:\n\n- First 7 years: \u201cWriting lots of code and building\u201d\n- Became a manager reluctantly when \u201cno one wanted to manage that company\u201d\n- Built the data warehousing business and took over engineering\n- Transitioned from a \u201chands-on IC to a useless manager over the past 5 years\u201d\n\nKey leadership lessons:\n\n- **Delegation mistakes**: \u201cDelegated too much was one major mistake\u201d\n- **Imposter syndrome**: Initially deferring too much to hired executives\n- **Context matters**: Realizing that external hires often lack crucial context\n- **Founder therapy groups**: The value of peer support when hiring executives\n\n## The Future: AI-Native Databases\n\nXin sees a massive disruption coming to the $100B OLTP market still dominated by Oracle. The key insight: AI won\u2019t just optimize existing databases\u2014it will fundamentally reimagine how we build and operate data systems.\n\n\u201cFuture databases will be provisioned and maintained primarily by AI,\u201d Xin predicts. 
This isn\u2019t incremental improvement but architectural revolution:\n\n- **Self-optimizing schemas**: AI dynamically adjusting data models based on query patterns\n- **Autonomous provisioning**: Infrastructure that scales predictively, not reactively\n- **Intelligent indexing**: AI determining optimal indexes in real-time\n- **Cost collapse**: Building and maintaining custom applications becomes 10-100x cheaper\n\nHis provocative prediction challenges the entire enterprise software model: \u201cNow there\u2019s no reason for people to buy Workday when you can build bespoke solutions based on company workloads.\u201d When AI can generate and maintain custom applications at marginal cost, why pay for generic SaaS?\n\n## Industry Consolidation\n\nThe data infrastructure world is consolidating to five major players:\n\n- Three cloud service providers (each with their own offerings)\n- Databricks\n- Snowflake\n\n\u201cNone of them will go away. Smaller players will become irrelevant,\u201d Xin predicts, pointing to the Fivetran-dbt merger as evidence of this trend.\n\n* * *\n\n## Key Takeaways for AI Engineers\n\nThe Databricks story offers crucial lessons for technical leaders navigating the AI transformation:\n\n1. **Margin discipline matters**: Xin\u2019s rejection of low-margin model serving in favor of 80-90% margin software shows the importance of business model clarity, even in AI hype cycles.\n\n2. **Context beats credentials**: Founders who\u2019ve \u201cdone the grind\u201d often outperform prestigious hires lacking domain context \u2014 a lesson for both hiring and career planning.\n\n3. **Timing contrarian bets**: While competitors optimize for quarterly earnings, Databricks\u2019 multi-year GTM investment demonstrates how patient capital wins in enterprise markets.\n\n4. 
**AI changes everything**: The shift from human-managed to AI-managed infrastructure is a complete reimagining of the $100B+ database market.\n\n\n_As Xin\u2019s journey from \u201cwriting lots of code\u201d to \u201cuseless manager\u201d shows, the path to transforming industries requires both technical depth and strategic courage. In the AI era, those who understand both code and markets will shape the future of enterprise software._\n\n* * *\n\n```\n@article{\n leehanchung_databricks_reynold_xin,\n author = {Lee, Hanchung},\n title = {Databricks' Strategic Playbook: Reynold Xin on Growth, AI, and the Future of Data Infrastructure},\n year = {2025},\n month = {11},\n day = {06},\n howpublished = {\\url{https://leehanchung.github.io}},\n url = {https://leehanchung.github.io/blogs/2025/11/06/raynold-xin-databricks/}\n}\n```\n\nutterances",

- "title": "Databricks' Strategic Playbook: Reynold Xin on Growth, AI, and the Future of Data Infrastructure",

- "description": "Reynold Xin, Apache Spark's top contributor and Databricks executive, shares candid insights on Databricks' 60% YoY growth strategy, contrarian GTM investmen...",

- "fetched_at": "2025-12-12T13:07:52.767077Z",

- "source_name": "Lee Han Chung",

- "source_url": "https://leehanchung.github.io",

- "relevant_keyword": "cli"

- },

- {

- "name": "**Why Everyone Should Try Claude Skills** \\\\\n\\\\\nClaude Skills are the approachable AI tool I didn't know I needed.",

- "url": "https://nicknisi.com/posts/claude-skills",

- "type": "article",

- "status": "success",

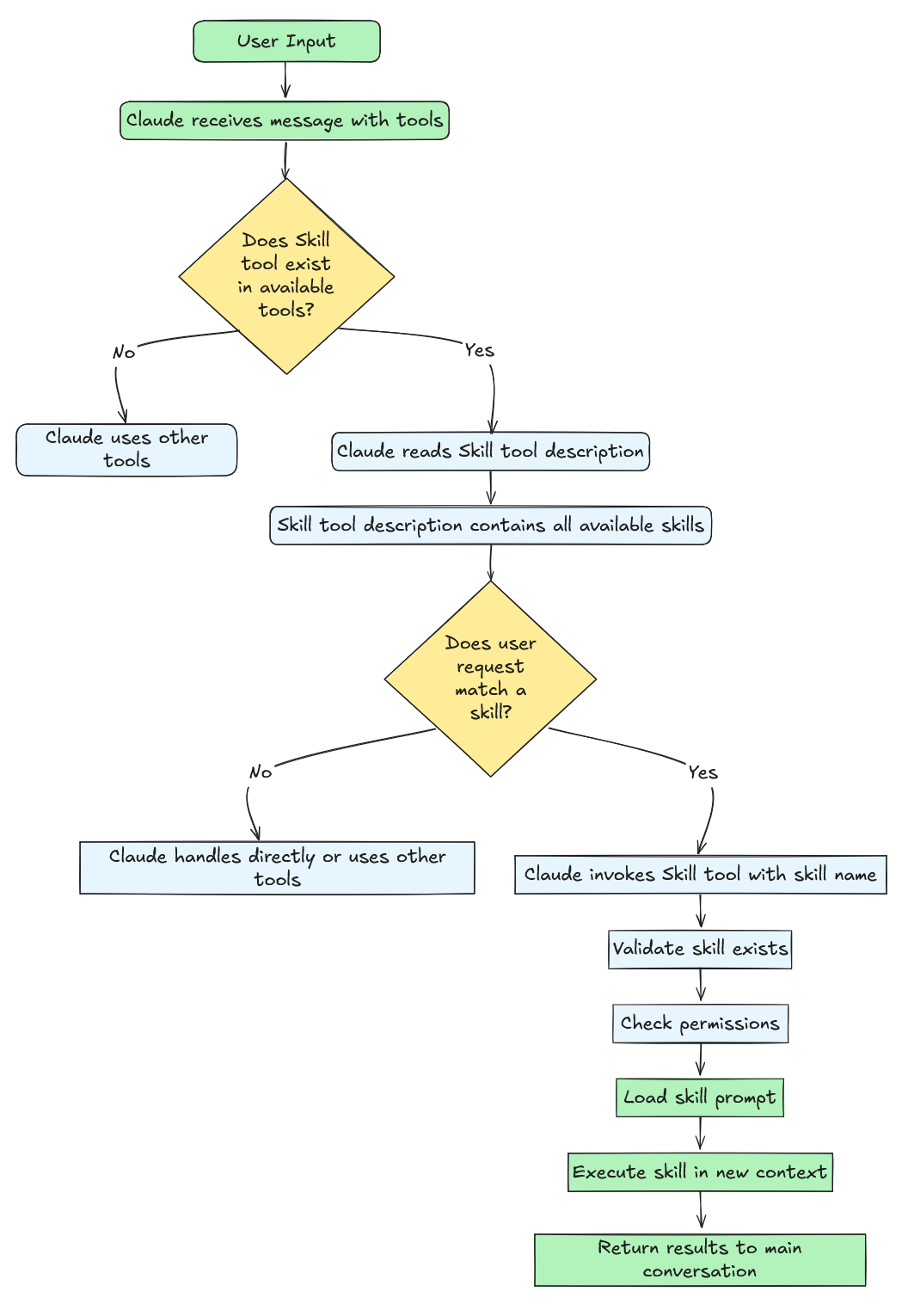

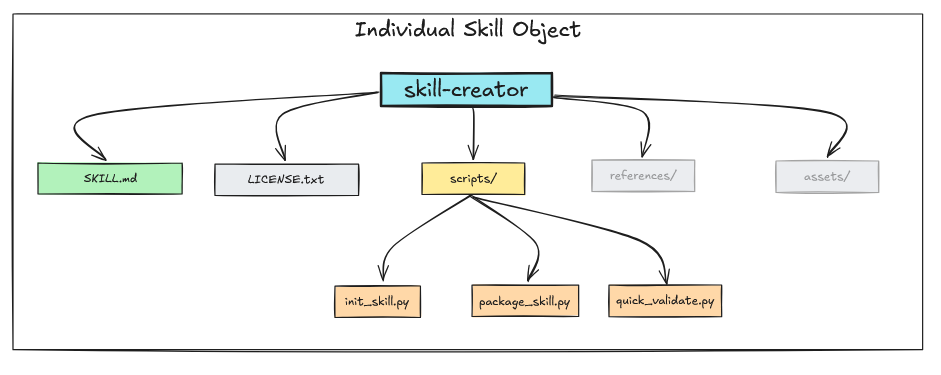

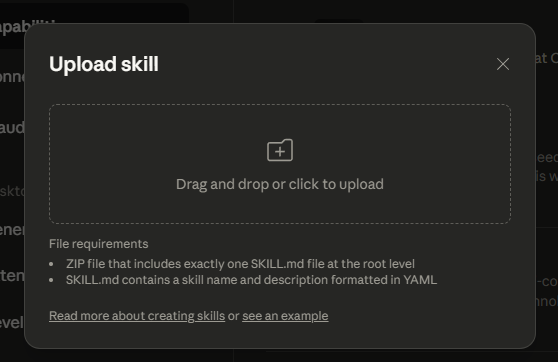

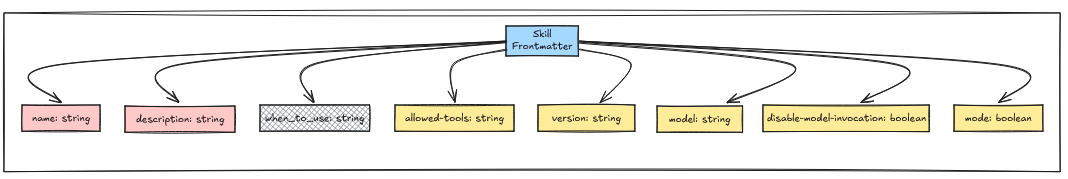

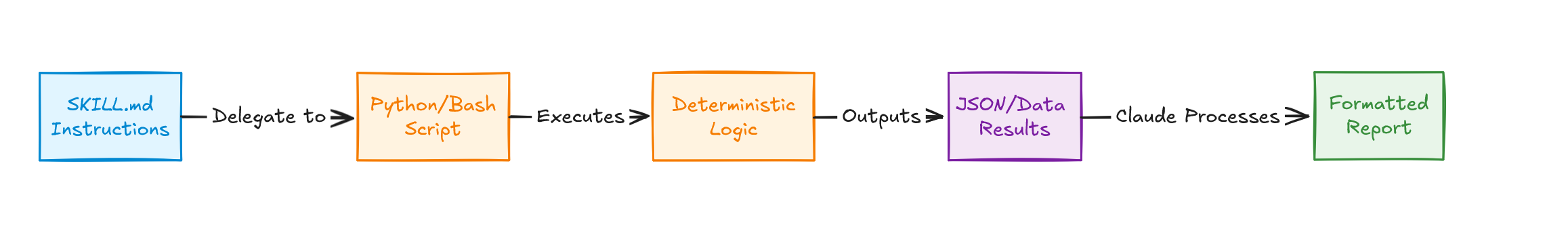

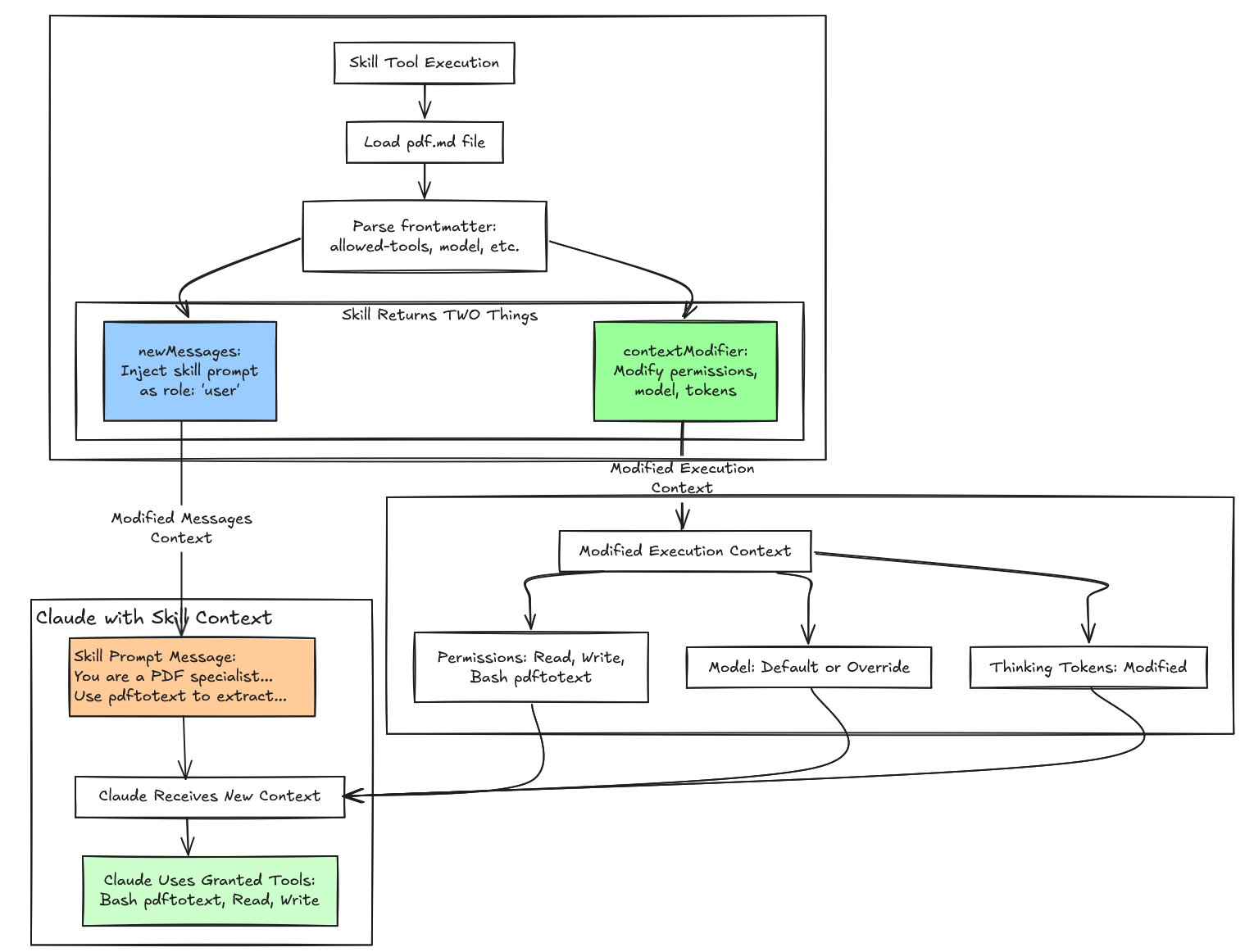

- "content": "Why Everyone Should Try Claude Skills \\| Nick Nisi\n\n# Why Everyone Should Try Claude Skills\n\nAnthropic just released [Claude Skills](https://www.anthropic.com/news/skills), and I\u2019m trying not to get too excited. But I am.\n\nSimon Willison wrote that [this could be bigger than MCP](https://simonwillison.net/2025/Oct/16/claude-skills/). After spending time with Skills this week, I think he\u2019s right.\n\n## What Took Me a While to Understand\n\nSkills are just markdown files. That sounds simple. Maybe too simple. How could these be any different than the other Markdown files that Claude provides such as /commands, agents, and the iconic CLAUDE.md?\n\nIt took me a bit to see why this is different. Skills aren\u2019t about hiding context like subagents do. They aren\u2019t prompts you explicitly invoke like slash commands. Skills are about discovery and determinism.\n\nClaude figures out when to use them. And when it does, it can leverage scripts and other tools to generate consistent, predictable output.\n\n## Where Skills Really Work\n\nSkills can reference scripts and other resources. Claude Code will actually run those scripts in your environment. That\u2019s powerful.\n\nThey work in Claude Desktop too, which is exciting. But there\u2019s a catch - no network access. Skills can\u2019t fetch from the internet or hit APIs when running in the desktop or web versions. Everything has to be internal.\n\nAt first, this feels limiting. And it is, for Claude Desktop and web. But in Claude Code? No limits. You can access commands on your system, run scripts that talk to APIs, do whatever you need. MCP is about external connections. Skills are about what\u2019s already there.\n\n## Forcing Myself to Learn Them\n\n\n\nI\u2019m in San Francisco this week for a company onsite. I was asked to give a presentation on AI or my workflow. 
I decided to talk about Skills because they were brand new, and I wanted to force myself to actually learn them.\n\nI made three skills:\n\n[**GPT-5 Consultant**](https://github.com/nicknisi/dotfiles/tree/main/home/.claude/skills/gpt5-consultant): I turned my existing agent into a skill. It felt like a better fit. A self-contained script that knows how to talk to the OpenAI API. Clean. No external MCP needed.\n\n[**Conference Talk Builder**](https://github.com/nicknisi/dotfiles/tree/main/home/.claude/skills/conference-talk-builder): This one lets me brain-dump talking points and conclusions into a proper outline that tells a complete story. No more staring at blank slides wondering how to structure my thoughts.\n\n[**Claude Code Analyzer**](https://github.com/nicknisi/dotfiles/tree/main/home/.claude/skills/claude-code-analyzer): This is the one I\u2019m most excited about. It looks at how you use Claude Code in a project. It highlights permissions you grant frequently and suggests which ones to auto-approve. It recommends commands and agents you might want to create, then finds real examples on GitHub so you can see how others built similar tools. It also analyzes your usage patterns and suggests optimizations.\n\n## The Real Advantage Over MCP\n\nThe barrier to entry is absurdly low. You start with a markdown file. That\u2019s it.\n\nYou experiment. You package it as a zip. You send it to colleagues. They can try it, modify it, extract value from it immediately. No complex tooling. No hosting. No distribution headaches.\n\nClaude ships with a skill creator skill. You describe what you want, and it builds the skill for you. Drop the zip into `.claude/skills` or drag it into Claude Desktop or web. Done.\n\nMCP is powerful for external integrations. But Skills meet you where you are, with what you already have.\n\n## What Happened After My Talk\n\nI presented these skills to the company. Showed why they\u2019re powerful. 
Demoed what I\u2019d built.\n\nShortly after, I got a Slack message:\n\n> Felt inspired by Nick Nisi\u2019s talk and created a design system Claude skill. It takes all content from the WorkDS pages and provides Claude with context on how to use certain components. Not all components are there, but I figure we can add to it and improve it over time.\n\nSomeone took the idea and ran with it. Built something useful for their workflow. In the time it took me to grab coffee.\n\nThat\u2019s the power of low barriers.\n\n## Where This Fits in My Workflow\n\nI\u2019ve written before about [my AI tooling setup](https://nicknisi.com/posts/ai-tooling/) and [coding with Claude Code](https://nicknisi.com/posts/coding-with-my-eyes-wide-shut/). Skills fill a gap I didn\u2019t realize existed.\n\nThey sit between the full power of MCP and the simplicity of just talking to Claude. You get structured, repeatable workflows without the overhead of building external tools. That\u2019s exactly where I need them to be.\n\n## Try This\n\nEveryone should experiment with Skills. The approachability is the feature. Start with a markdown file and see what happens.\n\nAs Claude adds features, Skills will get more powerful. But they\u2019re already useful right now. You don\u2019t need to wait for the perfect use case. Make something small. See where it takes you.\n\n## 2 likes\n\n[](https://bsky.app/profile/jbranchaud.bsky.social)[](https://bsky.app/profile/drk.wtf)\n\n### Comments\n\n[2024 Review - A Year of Growth and Change](https://nicknisi.com/posts/2024)\n\n\n\n## Nick Nisi\n\nA passionate TypeScript enthusiast, podcast host, and dedicated community builder.\n\nFollow me on\n[Bluesky](https://bsky.app/profile/nicknisi.com).",

- "title": null,

- "description": "Claude Skills are the approachable AI tool I didn't know I needed.\n",

- "fetched_at": "2025-12-12T13:07:52.778261Z",

- "source_name": "Lee Han Chung",

- "source_url": "https://leehanchung.github.io",

- "relevant_keyword": "claude"

- },

- {

- "name": "Enterprise AI Transformation: The 4-Set Framework for IT 3.0",

- "url": "https://leehanchung.github.io/blogs/2025/09/19/bullshit-jobs/",

- "type": "article",

- "status": "success",

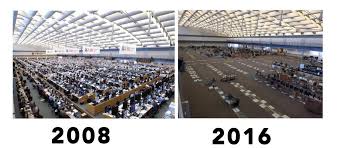

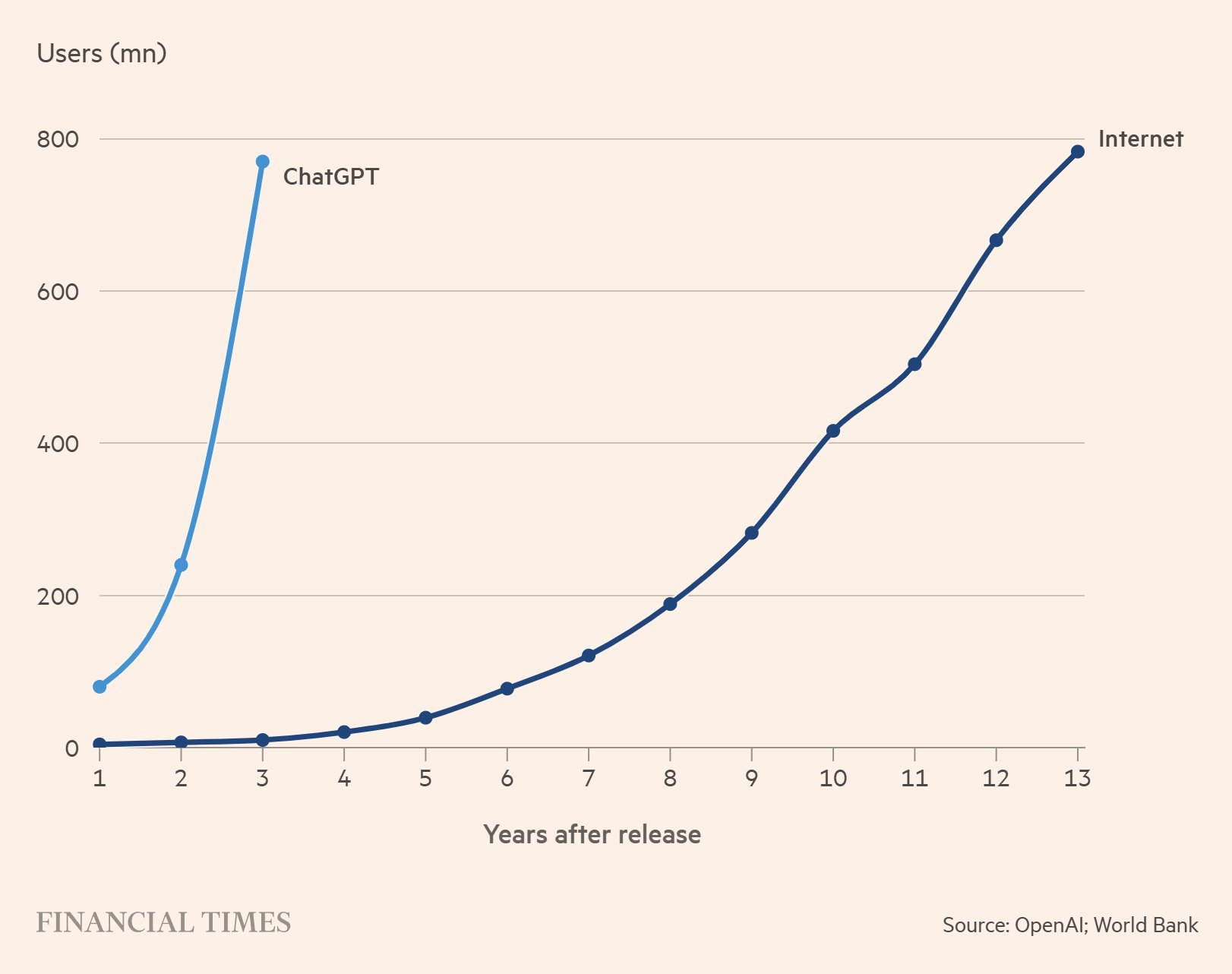

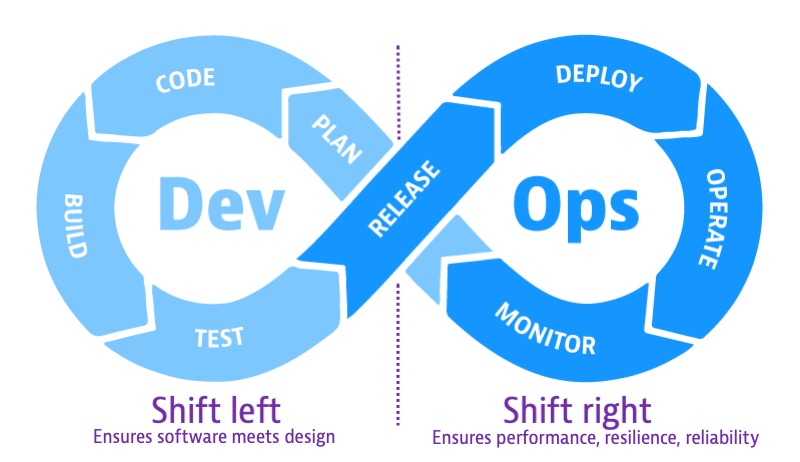

- "content": "If you\u2019re leading enterprise transformation, you need to understand what\u2019s happening to your workforce, your tech stack, and your competitors right now. Three waves of enterprise IT have systematically created and destroyed entire job categories. The third wave is accelerating, and it will reshape your organization whether you\u2019re ready or not.\n\n- **IT 1.0** built careers around custom in-house software. IT departments were strategic assets staffed with programmers and system operators who created bespoke systems mapping human processes to software workflows.\n- **IT 2.0** outsourced that intelligence to hundreds of SaaS solutions, creating a fragmented modular stack that spawned an entire ecosystem of \u201cglue work\u201d roles \u2013 SaaS admins, implementation engineers, system integrators, data analysts reconciling mismatched systems. Anthropologist David Graeber called these **\u201cbullshit jobs\u201d**: work that exists mainly because systems can\u2019t talk to each other.\n- **IT 3.0** is dissolving this glue layer with AI-native systems and agents that can draft, coordinate, and produce outcomes without predefined workflows. The **bullshit jobs of IT 2.0 are first on the chopping block**. Just as CAD tools erased armies of draftsmen, or UBS\u2019s trading automations emptied an entire stadium of trading floor in Stamford in 2012, today\u2019s AI agents are already hollowing out sales ops, recruiting coordinators, junior devs, and SaaS admins.\n\nYour strategic decisions today determine whether you\u2019re building the next wave of infrastructure or clinging to a dying paradigm. Here\u2019s what you need to know.\n\n# From IT 1.0 to IT 3.0\n\n## IT 1.0 \u2013 Building and Owning the Stack\n\nBefore the Internet and cloud computing, enterprises staffed full IT departments and procured from software vendors like IBM, Oracle, and SAP to build and run in-house systems. 
These were expensive and specialized, but tightly integrated with the business. This software translated existing human processes into workflows defined in bespoke software living on top of a database.\n\nIT departments were core strategic assets, staffed with programmers, system operators, and managers who created bespoke systems. Jobs in this era were directly tied to creating or maintaining foundational business infrastructure. This period gave us foundational software development methodologies like Agile, born from Chrysler\u2019s internal payroll system project. The work was complex and expensive, but it was essential.\n\n## IT 2.0 \u2013 SaaS and the Bullshit Job Boom\n\nThe 2000s marked the era of SaaS, with software now delivered over the Internet. Companies shifted from building internally to subscribing. This democratized access to powerful tools like cloud-based CRMs, HR suites, and ERPs. This enabled consumption-based pricing and product-led growth business models. At the same time, this created a new systemic problem: a fragmented modular stack of hundreds of applications that are siloed. Data silos and operational silos emerged everywhere, with Excel files pushed via email to glue everything together.\n\nThis fragmentation gave birth to a massive ecosystem of what David Graeber termed \u201c [bullshit jobs](https://strikemag.org/bullshit-jobs/)\u201d \u2013 roles that exist primarily to service the friction between systems. These aren\u2019t jobs that create direct value; they exist to pay the \u201cinformation organization tax.\u201d Duct tapers patch half-working systems together with shoddy code or send Excel files via email. 
Box tickers send Excel sheets to half a dozen folks for check-off, creating the appearance that something productive is being done when it is not.\n\nThis boom in \u201cglue work\u201d included:\n\n- System Administrators: Entire careers built around configuring, managing, and patching platforms like Salesforce or Workday.\n- System Integrators: Specialists whose job was to connect one SaaS tool to another and migrate data between them.\n- Data Entry & Junior Analysts: Armies of people hired to manually move data from spreadsheets and PDFs into rigid SaaS formats.\n- Operations Roles (Sales Ops, HR Ops, Rev Ops): Professionals who spend their days coordinating approvals, managing handoffs, and bridging gaps that APIs and integrations never quite solved.\n\nGraeber\u2019s notion of **bullshit jobs** became reality: people spending careers moving data from one rectangle on their monitor to another. This wasn\u2019t work in the economic sense of creating value \u2013 it was a side effect of SaaS modularity and weak interoperability.\n\nThe **information organization tax** was massive. Meetings, cross-departmental handoffs, redundant reporting \u2013 all to coordinate intelligence scattered across silos.\n\nThis era also saw the rise of **IT Consulting and Outsourcing**, with inherent misaligned incentives. Shoddy software was developed to maximize overall contract value, including system integration, data migration, and ongoing maintenance contract renewals. The now-hollowed-out IT departments were no longer technical enough to ensure quality of work. [Boeing\u2019s outsourcing of its software design through layers of sub-contracting was the biggest showcase of this failure.](https://spectrum.ieee.org/how-the-boeing-737-max-disaster-looks-to-a-software-developer)\n\nThis entire industry \u2014 admins, ops, consultants \u2014 was built on a foundation of systems that couldn\u2019t talk to each other. 
AI is now removing that foundation.\n\n## IT 3.0 \u2013 AI Dissolves the Glue\n\n**The opportunity**: Organizations that transform first will operate with half the headcount and twice the velocity of their competitors.\n\nThe AI-native wave is fundamentally different. Instead of creating more silos, AI agents and copilots are dissolving the \u201cglue\u201d that holds the fragmented IT 2.0 stack together. These systems can draft workflows, translate data between formats, and execute complex processes across multiple tools without human intervention or mediation.\n\nJust as **CAD tools** turned hundreds of draftsmen into a handful of designers with software, or as **UBS shuttered its massive Stamford, Connecticut trading floor in 2012** after algorithmic trading made human traders redundant, AI is now dismantling the SaaS-created glue layer. All the workflow definitions and playbooks are becoming obsolete and will be codified within the model and tools.\n\n\n\n> IT 3.0 software now requires environments and infrastructure for agents to operate, instead of translating human-centric processes from the 1900s into workflows \u2013 the era of agent-computer interfaces.\n\nThese roles are being eliminated now, not in some distant future:\n\n- **SaaS admins** \u2192 AI copilots auto-generate workflows, reports, and integrations. If you\u2019re still hiring Salesforce admins, you\u2019re building the wrong team.\n- **Recruiting coordinators** \u2192 Chatbots already schedule interviews and screen resumes. This role has maybe 18 months left.\n- **Entry-level developers** \u2192 Code assistants handle glue code and CRUD apps. Your hiring funnel should reflect this.\n- **Sales ops & BDRs** \u2192 AI personalizes outreach and processes leads at volume. Manual outreach doesn\u2019t scale anymore.\n- **Finance & HR ops** \u2192 AI reconciles invoices, updates HR records, and generates compliance docs. 
Every manual handoff is a liability.\n\nThe changes are accelerating because the barriers to adoption are collapsing. IT 1.0 required massive capital expenditure and months of implementation. IT 2.0 reduced cost but still required lengthy training and change management. IT 3.0 collapses time to value: it takes seconds to issue commands to ChatGPT instead of months of training super users on PeopleSoft for administrators or EPIC for healthcare. When adoption barriers disappear, displacement accelerates.\n\n### Which IT 2.0 Tools Are Most at Risk?\n\nAI will hit hardest where SaaS tools created **clerical overhead**:\n\n- **CRM (Salesforce, HubSpot)** \u2013 lead enrichment, pipeline updates, report generation: all ripe for AI automation.\n- **ATS & HR platforms (Workday, Greenhouse)** \u2013 resume parsing, candidate scheduling, payroll entry: trivial for AI.\n- **Customer support platforms (Zendesk, ServiceNow)** \u2013 tier-1 support is already being offloaded to LLM agents.\n- **Project management (Asana, Jira, Monday)** \u2013 task creation, updates, and cross-tool syncing will be handled by AI copilots, reducing the need for ops roles.\n- **Finance/ERP (NetSuite, SAP)** \u2013 invoice matching, expense categorization, forecasting: automatable.\n\nThe SaaS platforms may survive, but the **job ecosystems around them** will not.\n\n# AI Transformations\n\nOne of the biggest challenges in AI transformation is how we measure. Poorly defined metrics will incentivize the wrong behavior.\n\nTake engineering as an example:\n\n- Lines of code written by AI\n- Weekly active users on Cursor\n- Percentage of PRs reviewed by AI\n\nThese metrics measure only adoption and utilization of AI tools and incentivize activity. They measure activities and outputs, not impacts and outcomes. People are equipped with AI tools but operate the same way as they did before ChatGPT. 
Cargo-cult AI transformation.\n\nWhat actually matters and should be tracked are:\n\n- **Product lead time:** from PM idea inception to production.\n- **Ticket resolution time:** Time to resolve request and support tickets\n- **Change fail percentage:** how often do your deployments blow up and require hotfixes.\n\nThese aren\u2019t novel ideas; they\u2019re adapted from [DORA metrics](https://dora.dev/guides/dora-metrics-four-keys/). But implementing them requires serious platform investment in analytics and observability.\n\nAnd if we\u2019re being realistic, most engineering hours aren\u2019t spent building features. They\u2019re occupied by operational overhead and enterprise architecture complexity, with interdependencies between services, org silos, and coordination tax. The microservices dream turned into a distributed monolith nightmare.\n\n\n\nThis problem isn\u2019t unique to engineering. Every function needs to figure out what velocity means for them, and the answers are completely different. Legal measures contracts processed per month, not contracts reviewed\u2014velocity over volume in progress. Marketing tracks campaign velocity, concept to launch\u2014how fast can you test and iterate? Sales optimization is deal cycle time, qualified lead to close, not pipeline size. Finance cares about close cycle time and days to financial insights. Same transformation, completely different metrics.\n\nThis is why centralizing AI transformation is very hard to do well. Many companies are setting up Chief AI Officers and AI Enablement Engineering Teams to \u201cmanage\u201d the IT 3.0 shift. This creates exactly the wrong dynamic. One overwhelmed function while everyone else waits for direction and navigates bureaucracy. You end up with coordination overhead on top of your existing coordination overhead.\n\nFor companies actually making the IT 3.0 transition work, every executive owns AI transformation for their domain. 
Legal, finance, marketing, sales, engineering. Each has dedicated teams and executive accountability to transform their function from within. This is a business transformation, not IT transformation. The Chief AI Officer org chart is IT 2.0 thinking applied to an IT 3.0 problem.\n\n## Measuring AI Transformation with 4-Sets Framework\n\nTo measure AI transformation, we can leverage the 4-Sets Framework used in the early days of Big Data: mindset, toolset, skillset, and dataset. This helps us analyze an organization along four dimensions.\n\n### Mindset: Getting Comfortable with Probabilistic Systems\n\nAI is fundamentally different from deterministic software. Same input, different outputs. \u201cCorrect\u201d is contextual, not binary. This breaks every QA process and approval workflow designed for deterministic systems. When AI works 95% of the time, quality control becomes exponentially harder. Most organizations can\u2019t get comfortable with \u201c95% accurate\u201d instead of \u201c100% correct.\u201d\n\nThe hardest concept for product teams to grasp is that AI makes shiny demos trivially easy and production deployment brutally hard. Compelling demos get built in days. Production at scale\u2014with acceptable error rates, latency, and cost\u2014takes months. The gap between \u201cit works in the demo\u201d and \u201cit works at 10 requests per second\u201d is where most AI projects die. OpenAI built their [Agent Builder in 6 weeks](https://x.com/NoahEpstein_/status/1975982846163464418) with primitive user experiences. This made investors realize that n8n is the actual category leader and allowed it to [raise $180M at a $2.5B valuation](https://blog.n8n.io/series-c/).\n\nThis requires a cultural shift most companies aren\u2019t prepared for. You have to allow failures to happen. Innovation requires experimentation. Experimentation requires accepting failure. If your culture punishes failed AI experiments the same way it punishes product failures or production outages, nobody will innovate. 
There will be theater. Cargo-cult AI adoption where engineers use Cursor exactly like they use VSCode. Weekly active users up, changes in productivity flat. All form, no function. The shift from \u201czero defects\u201d culture to \u201cfast iteration\u201d culture is the hardest change to make, and it\u2019s the one that determines whether transformation succeeds or becomes expensive theater.\n\n### Toolset: Give Your People AI, Then Get Out of the Way\n\nThe toolset question isn\u2019t \u201cwhat should we build?\u201d It\u2019s \u201cwhat do we give employees so they can experiment?\u201d Enable the early adopters. Most organizations approach this backwards. They lock down AI access while forming committees to \u201cevaluate use cases.\u201d By the time the committee finishes, your competitors are six months of experimentation and learning ahead of you. GPT 3.5 has now become GPT 4, and you just wasted one full generation of AI progress. Just look at the adoption curve and it\u2019s not hard to realize that one day in AI is seven days in software. The progress of AI research and engineering is the hyperbolic time chamber in Dragon Ball.\n\n![alt text](https://leehanchung.github.io/assets/img/2025-09-19/06-adoption-curve.png)\n\nStart with access. Enterprise licenses for Claude, ChatGPT, coding assistants like Cursor or Windsurf. The ROI comes from letting your team discover what works instead of trying to predict it from a conference room.\n\nRemove approval bureaucracy for internal tools. If an engineer wants to try auto-generating test cases, or marketing wants to experiment with campaign copy variations, they shouldn\u2019t need VP sign-off. Create guardrails \u2014 what data can\u2019t leave the organization, what decisions need human review \u2014 then let teams iterate within those boundaries. The organizations winning at this have clear rules and fast iteration, not slow approvals and perfect safety.\n\nThe infrastructure and platform shift is fundamental. 
You need environments where AI can actually operate \u2014 API access to internal systems, data pipelines that AI can query, workflows that can be triggered programmatically. If your systems only work through web UIs and manual clicking, AI can\u2019t help much. This doesn\u2019t mean rebuilding everything overnight, but it does mean every new system should be designed for programmatic access first, human interfaces second. Build for agent-computer interfaces.\n\nBuild safe sandboxes. The real blocker for AI is that nobody can access production data to try things. Create environments with representative data where people can experiment without going through 20 levels of approval or risking compliance violations. Sanitized customer data, recent transaction samples, realistic test cases. Make it real enough to be useful, safe enough to be accessible.\n\n### Skillset: Developing AI Literacy in Your Existing Workforce\n\nThe skillset question is challenging. It\u2019s not \u201cwho should we hire?\u201d but \u201chow do we develop our existing people?\u201d There\u2019s institutional knowledge and domain expertise sitting in the current workforce. Replacing them with AI-native hires means throwing away context that took years to build.\n\nStart with AI literacy training, but make it practical. Nobody needs another 30-minute video on \u201cwhat is generative AI.\u201d They need hands-on practice using AI tools for their actual work. Give your legal team access to contract analysis tools and let them discover what works. Let your sales ops team experiment with lead scoring. Let engineers try code generation on real tickets. Learning happens through doing, not watching presentations.\n\nThe ratio of architects to operators is inverting. You need more people who can define what should be automated and govern how it operates, fewer people executing repetitive workflows. Some of your SaaS admins can become platform engineers if you invest in their development. 
Some of your operations coordinators can become exception handlers and strategic decision-makers. But this requires intentional churning and upskilling, not just telling people to \u201clearn AI.\u201d\n\nDevOps taught us the \u201cshift left\u201d movement\u2014pushing responsibility to development teams instead of operations teams. IT 3.0 accelerates this dramatically. You need platform engineers building infrastructure that enables AI to operate, not program managers and coordinators managing handoffs between silos.\n\n![alt text](https://leehanchung.github.io/assets/img/2025-09-19/07-retrain-or-restructure.png)\n\nRetrain when someone has deep domain knowledge but outdated execution skills. For example, retrain that SaaS admin who knows every corner case in your business rules. Restructure when the role itself is pure coordination overhead with no domain expertise, e.g., data entry coordinators, implementation engineers patching systems together, junior developers writing glue code.\n\nThe roles that survive require one of three things: deep technical expertise (machine learning engineers, platform engineers, infrastructure architects), deep context and judgment (exception handlers, strategic decision-makers), or genuine human connection (relationship building, complex negotiation, empathy-driven work). Everything else is getting automated, and your workforce development strategy needs to account for this reality instead of pretending you can train your way around it.\n\n### Dataset: The Enterprise Data Reality Nobody Wants to Talk About\n\nHere\u2019s the uncomfortable truth: most enterprises have tons of data and almost none of it is useable for AI reasoning. SaaS tools work in silos: Salesforce can run without talking to Workday, with humans bridging the gaps. AI can\u2019t. 
Reasoning engines need comprehensive cross-functional context to make decisions, and your data is scattered across dozens of systems with inconsistent schemas, undocumented business logic, and quality issues nobody has prioritized fixing because \u201cit works fine for reporting.\u201d\n\nThe gap between \u201cwe have the data\u201d and \u201cAI can reason with our data\u201d is measured in quarters or years. You need historical decision rationale, not just transaction logs. Relationship graphs between entities, not just foreign keys. Temporal context showing why things changed over time. Cross-functional workflows documenting how sales, legal, and finance actually interact, not the idealized process in the wiki nobody updates.\n\nThis is unglamorous infrastructure work that doesn\u2019t demo well but blocks everything else. Data quality becomes infrastructure, not a nice-to-have. Someone needs to own making datasets useable, not just available. Start with safe sandboxes where teams can experiment with representative production data without 20 levels of approval. Prove value with sanitized data, then earn access to more sensitive datasets through results, not presentations.\n\nBuild infrastructure that enables experimentation without exposure. Clear governance and guardrails on what data can\u2019t leave the organization and what decisions need human review, then let teams move fast within those boundaries. Companies that solve this first will have compounding advantages as their AI systems get smarter from accumulated context while competitors are still filling out data access request forms.\n\n## The Strategic Warning: Don\u2019t Create AI Slop Janitors\n\nRushing to implement AI without redesigning workflows creates worse jobs than the ones you\u2019re eliminating.\n\nWhile AI eliminates IT 2.0\u2019s glue work, poorly implemented AI is creating its own category of bullshit jobs: **AI Slop Janitors**. 
This happens when organizations bolt AI onto existing processes instead of rebuilding from first principles.\n\nLook at the content industry: writers who once led creative teams now edit ChatGPT\u2019s robotic prose for 1-5 cents per word (versus 10+ cents for original writing). They fix the same formulaic mistakes daily \u2013 removing \u201cdelve\u201d and \u201cnevertheless,\u201d fact-checking hallucinations, making text sound less awkward. The absurdity peaks when freelance platforms use AI detectors while simultaneously hiring people to make AI text undetectable. [Human workers are being brought in to fix what AI gets wrong.](https://www.nbcnews.com/tech/tech-news/humans-hired-to-fix-ai-slop-rcna225969)\n\nThis pattern is emerging across industries:\n\n- **AI Tutors** teaching LLMs to write better, e.g., [xAI Presentation and Writing Tutor](https://job-boards.greenhouse.io/xai/jobs/4879785007)\n- **Customer service** bots requiring constant human backup for edge cases\n- **Engineering teams** spending more time fixing AI-generated code than writing it themselves, e.g., [The era of AI Slop cleanup has begun](https://www.reddit.com/r/ExperiencedDevs/comments/1mg2r6y/the_era_of_ai_slop_cleanup_has_begun/)\n- **\u201cAutonomous\u201d vehicles** with remote human operators standing by, e.g., [Waymo\u2019s Fleet Response Team](https://waymo.com/blog/2024/05/fleet-response)\n\nThese aren\u2019t valuable human-in-the-loop systems. They are temporary workers used to clean up AI\u2019s mess, and once AI learns from their corrections, these jobs will be replaced. Organizations creating these roles are wasting capital on the wrong side of the transition. If your AI implementation plan includes hiring \u201cAI quality reviewers\u201d or \u201cAI content editors,\u201d you\u2019re implementing AI wrong.\n\n## Conclusion\n\nThe transition from IT 2.0 to IT 3.0 is messy and accelerating. The glue work jobs are disappearing whether you\u2019re ready or not. 
But the replacements aren\u2019t automatically better \u2013 poorly implemented AI creates worse bullshit jobs than the ones being eliminated. AI Slop Janitors stare into the abyss and fix the same robotic mistakes or the same vibe coded apps or workflows.\n\nOrganizations that move decisively will operate with half the headcount and twice the velocity of their competitors. Those that don\u2019t will find themselves either:\n\n1. Carrying dead weight in IT 2.0 roles while competitors move faster\n2. Creating AI Slop Janitor positions because they bolted AI onto broken processes\n3. Disrupted entirely by AI-native competitors who rebuilt from first principles\n\n**The opportunity is real, but narrow.** As AI eliminates the information organization tax, capital and talent can shift to work that genuinely requires human judgment, deep context, and strategic thinking. But this shift won\u2019t happen organically \u2013 it requires deliberate choices about team structure, workflow redesign, and where to compete.\n\n**Your organizational priorities:**\n\n- Assess org maturity using the 4-Sets transformation framework: Mindset, Dataset, Toolset, Skillset\n- Redesign workflows from first principles for AI, not bolting AI onto existing processes\n- Distribute AI ownership across functions \u2013 every team owns their domain\u2019s transformation\n- Invest in platform engineering and AI infrastructure, not more ops coordinators\n- Build safe sandbox environments for experimentation without approval bureaucracy\n\nThe bullshit jobs aren\u2019t disappearing \u2013 they\u2019re being replaced by different bullshit jobs. The question is whether you\u2019re building the infrastructure that eliminates them, or whether you\u2019re creating the next generation of make-work. 
Choose fast, because your competitors already are.\n\n## [References](https://leehanchung.github.io/blogs/2025/09/19/bullshit-jobs/\\#references)\n\n### Articles & Blog Posts\n\n- [Bullshit Jobs](https://strikemag.org/bullshit-jobs/) \\- David Graeber\u2019s original essay on bullshit jobs\n- [How the Boeing 737 Max Disaster Looks to a Software Developer](https://spectrum.ieee.org/how-the-boeing-737-max-disaster-looks-to-a-software-developer) \\- Analysis of Boeing\u2019s software outsourcing failure\n- [Service as Software](https://leehanchung.github.io/blogs/2024/02/01/service-as-software/) \\- On delivering outcomes through AI agents\n- [AI Impact on Software](https://leehanchung.github.io/blogs/2024/08/30/ai-impact-software/) \\- Cost to Implement, Time to Implement, and Time to Utility\n- [Humans Hired to Fix AI Slop](https://www.nbcnews.com/tech/tech-news/humans-hired-to-fix-ai-slop-rcna225969) \\- NBC News on AI cleanup jobs\n- [The Era of AI Slop Cleanup Has Begun](https://www.reddit.com/r/ExperiencedDevs/comments/1mg2r6y/the_era_of_ai_slop_cleanup_has_begun/) \\- Discussion on AI code cleanup\n- [Waymo\u2019s Fleet Response Team](https://waymo.com/blog/2024/05/fleet-response) \\- Human operators for self-driving cars\n\n### Job Postings\n\n- [xAI Presentation and Writing Tutor](https://job-boards.greenhouse.io/xai/jobs/4879785007) \\- Example of AI tutor positions\n\n```\n@article{\n leehanchung_bullshit_jobs,\n author = {Lee, Hanchung},\n title = {The End of \"Bullshit Jobs\": From IT 1.0 to the AI-Powered 3.0 Era},\n year = {2025},\n month = {09},\n day = {19},\n howpublished = {\\url{https://leehanchung.github.io}},\n url = {https://leehanchung.github.io/blogs/2025/09/19/bullshit-jobs/}\n}\n```",

- "title": "Enterprise AI Transformation: The 4-Set Framework for IT 3.0",

- "description": "How to transform from IT 2.0 SaaS to AI-native systems. Eliminate bullshit jobs, avoid AI Slop Janitors. Framework for executives leading IT 3.0 adoption.",

- "fetched_at": "2025-12-12T13:07:52.972848Z",

- "source_name": "Lee Han Chung",

- "source_url": "https://leehanchung.github.io",

- "relevant_keyword": "claude"

- },

- {

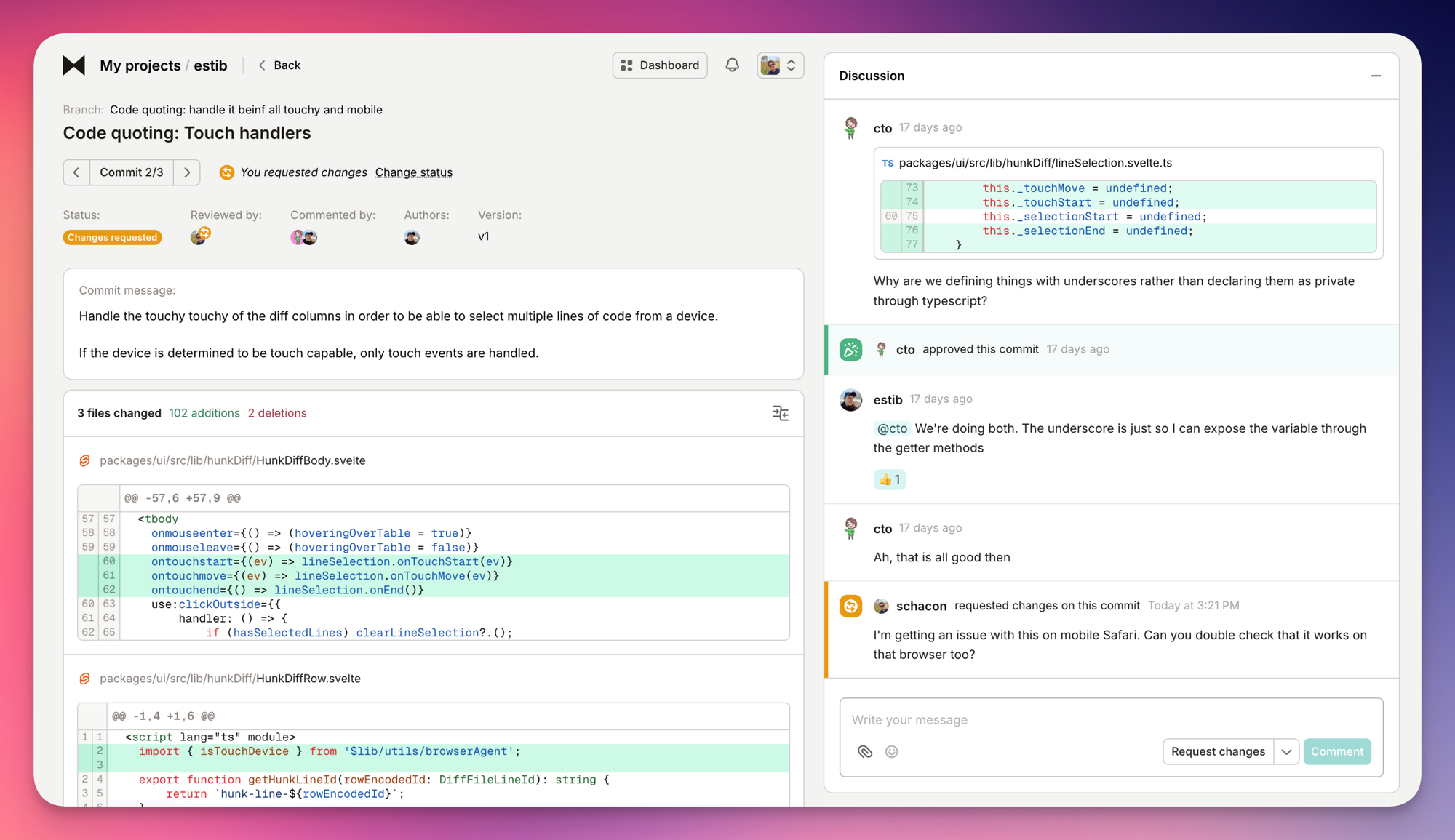

- "name": "How I Use Every Claude Code Feature",

- "url": "https://blog.sshh.io/p/how-i-use-every-claude-code-feature",

- "type": "article",

- "status": "success",

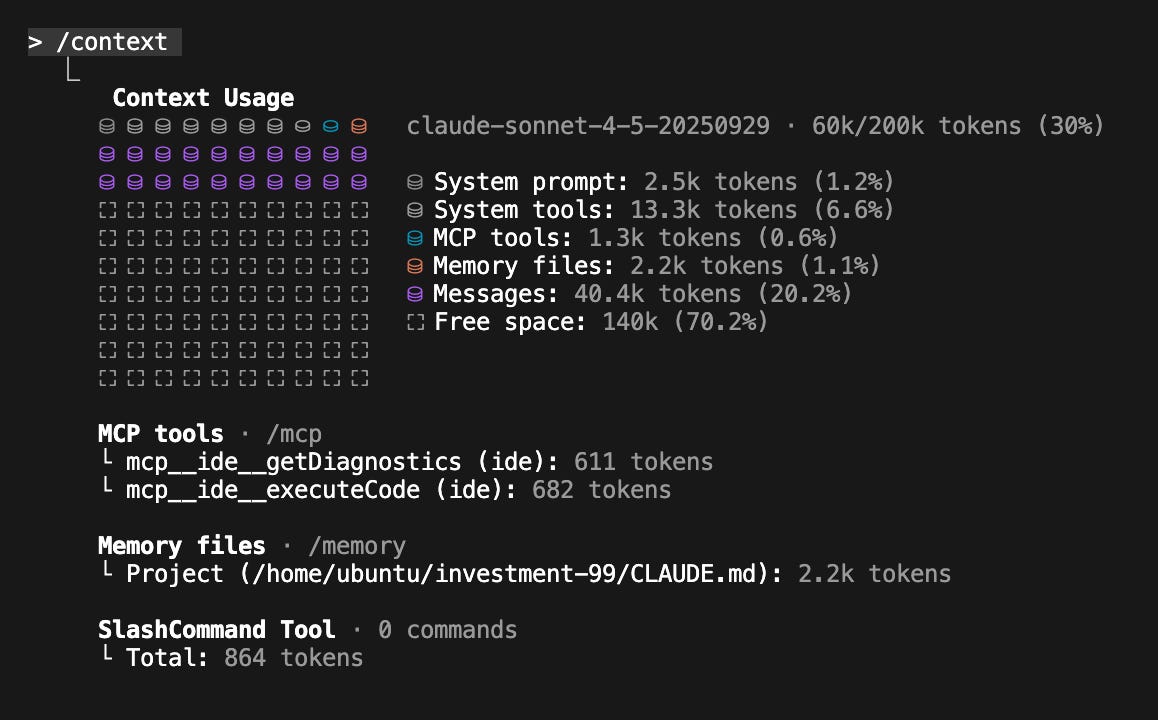

- "content": "# [Shrivu\u2019s Substack](https://blog.sshh.io/)\n\n# How I Use Every Claude Code Feature\n\n### A brain dump of all the ways I've been using Claude Code.\n\n[Shrivu Shankar](https://substack.com/@shrivu)\n\nNov 01, 2025\n\nI use Claude Code. A lot.\n\nAs a hobbyist, I run it in a VM several times a week on side projects, often with `--dangerously-skip-permissions` to vibe code whatever idea is on my mind. Professionally, part of my team builds the AI-IDE rules and tooling for our engineering team that consumes _several billion tokens per month_ just for codegen.\n\nThe CLI agent space is getting crowded and between Claude Code, Gemini CLI, Cursor, and Codex CLI, it feels like the real race is between Anthropic and OpenAI. But TBH when I talk to other developers, their choice often comes down to what feels like superficials\u2014a \u201clucky\u201d feature implementation or a system prompt \u201cvibe\u201d they just prefer. At this point these tools are all pretty good. I also feel like folks often also over index on the output style or UI. Like to me the \u201cyou\u2019re absolutely right!\u201d sycophancy isn\u2019t a notable bug; it\u2019s a signal that you\u2019re too in-the-loop. Generally my goal is to \u201cshoot and forget\u201d\u2014to delegate, set the context, and let it work. 
Judging the tool by the final PR and not how it gets there.\n\nHaving stuck to Claude Code for the last few months, this post is my set of reflections on Claude Code\u2019s entire ecosystem. We\u2019ll cover nearly every feature I use (and, just as importantly, the ones I don\u2019t), from the foundational `CLAUDE.md` file and custom slash commands to the powerful world of Subagents, Hooks, and GitHub Actions. **This post ended up a bit long and I\u2019d recommend it as more of a reference than something to read in entirety.**\n\n## [CLAUDE.md](https://www.anthropic.com/engineering/claude-code-best-practices)\n\nThe single most important file in your codebase for using Claude Code effectively is the root `CLAUDE.md`. This file is the agent\u2019s \u201cconstitution,\u201d its primary source of truth for how your specific repository works.\n\nHow you treat this file depends on the context. For my hobby projects, I let Claude dump whatever it wants in there.\n\nFor my professional work, our monorepo\u2019s `CLAUDE.md` is strictly maintained and currently sits at 13KB (I could easily see it growing to 25KB).\n\n- It only documents tools and APIs used by 30% (arbitrary) or more of our engineers (else tools are documented in product or library specific markdown files)\n\n- We\u2019ve even started allocating effectively a max token count for each internal tool\u2019s documentation, almost like selling \u201cad space\u201d to teams. If you can\u2019t explain your tool concisely, it\u2019s not ready for the `CLAUDE.md`.\n\n\n#### Tips and Common Anti-Patterns\n\nOver time, we\u2019ve developed a strong, opinionated philosophy for writing an effective `CLAUDE.md`.\n\n1. **Start with Guardrails, Not a Manual.** Your `CLAUDE.md` should start small, documenting based on what Claude is getting wrong.\n\n2. **Don\u2019t**`@` **-File Docs.** If you have extensive documentation elsewhere, it\u2019s tempting to `@`-mention those files in your `CLAUDE.md`. 
This bloats the context window by embedding the entire file on every run. But if you just _mention_ the path, Claude will often ignore it. You have to _pitch_ the agent on _why_ and _when_ to read the file. \u201cFor complex \u2026 usage or if you encounter a `FooBarError`, see `path/to/docs.md` for advanced troubleshooting steps.\u201d\n\n3. **Don\u2019t Just Say \u201cNever.\u201d** Avoid negative-only constraints like \u201cNever use the `--foo-bar` flag.\u201d The agent will get stuck when it thinks it _must_ use that flag. Always provide an alternative.\n\n4. **Use**`CLAUDE.md` **as a Forcing Function.** If your CLI commands are complex and verbose, don\u2019t write paragraphs of documentation to explain them. That\u2019s patching a human problem. Instead, write a simple bash wrapper with a clear, intuitive API and document _that_. Keeping your `CLAUDE.md` as short as possible is a fantastic forcing function for simplifying your codebase and internal tooling.\n\n\nHere\u2019s a simplified snapshot:\n\n```\n# Monorepo\n\n## Python\n- Always ...\n- Test with <command>\n... 10 more ...\n\n## <Internal CLI Tool>\n... 10 bullets, focused on the 80% of use cases ...\n- <usage example>\n- Always ...\n- Never <x>, prefer <Y>\n\nFor <complex usage> or <error> see path/to/<tool>_docs.md\n\n...\n```\n\nFinally, we keep this file synced with an `AGENTS.md` file to maintain compatibility with other AI IDEs that our engineers might be using.\n\n_If you are looking for more tips for writing markdown for coding agents see [\u201cAI Can\u2019t Read Your Docs\u201d,](https://blog.sshh.io/p/ai-cant-read-your-docs) [\u201cAI-powered Software Engineering\u201d,](https://blog.sshh.io/p/ai-powered-software-engineering) and [\u201cHow Cursor (AI IDE) Works\u201d.](https://blog.sshh.io/p/how-cursor-ai-ide-works)_\n\n**The Takeaway:** Treat your `CLAUDE.md` as a high-level, curated set of guardrails and pointers. 
Use it to guide where you need to invest in more AI (and human) friendly tools, rather than trying to make it a comprehensive manual.\n\n## [Compact, Context, & Clear](https://www.reddit.com/r/ClaudeAI/comments/1lk2oay/compact_and_continue_or_clear_and_start_again/)\n\nI recommend running `/context` mid coding session at least once to understand how you are using your 200k token context window (even with Sonnet-1M, I don\u2019t trust that the full context window is actually used effectively). For us a fresh session in our monorepo costs a baseline ~20k tokens (10%) with the remaining 180k for making your change \u2014 which can fill up quite fast.\n\n[![](https://substackcdn.com/image/fetch/$s_!o_oM!,w_1456,c_limit,f_auto,q_auto:good,fl_progressive:steep/https%3A%2F%2Fsubstack-post-media.s3.amazonaws.com%2Fpublic%2Fimages%2F7ee93292-646a-407a-95da-d469be81002e_1158x720.png)](https://substackcdn.com/image/fetch/$s_!o_oM!,f_auto,q_auto:good,fl_progressive:steep/https%3A%2F%2Fsubstack-post-media.s3.amazonaws.com%2Fpublic%2Fimages%2F7ee93292-646a-407a-95da-d469be81002e_1158x720.png) A screenshot of **/context** in one of my recent side projects. You can almost think of this like disk space that fills up as you work on a feature. After a few minutes or hours you\u2019ll need to clear the messages (purple) to make space to continue.\n\nI have three main workflows:\n\n- `/compact` **(Avoid):** I avoid this as much as possible. The automatic compaction is opaque, error-prone, and not well-optimized.\n\n- `/clear` **\\+**`/catchup` **(Simple Restart):** My default reboot. I `/clear` the state, then run a custom `/catchup` command to make Claude read all changed files in my git branch.\n\n- \u201cDocument & Clear\u201d **(Complex Restart):** For large tasks. I have Claude dump its plan and progress into a `.md`, `/clear` the state, then start a new session by telling it to read the `.md` and continue.\n\n\n**The Takeaway:** Don\u2019t trust auto-compaction. 
Use `/clear` for simple reboots and the \u201cDocument & Clear\u201d method to create durable, external \u201cmemory\u201d for complex tasks.\n\n## [Custom Slash Commands](https://docs.claude.com/en/docs/claude-code/slash-commands)\n\nI think of slash commands as simple shortcuts for frequently used prompts, nothing more. My setup is minimal:\n\n- `/catchup`: The command I mentioned earlier. It just prompts Claude to read all changed files in my current git branch.\n\n- `/pr`: A simple helper to clean up my code, stage it, and prepare a pull request.\n\n\nIMHO if you have a long list of complex, custom slash commands, you\u2019ve created an anti-pattern. To me the entire point of an agent like Claude is that you can type _almost_ whatever you want and get a useful, mergable result. The moment you force an engineer (or non-engineer) to learn a new, documented-somewhere list of essential magic commands just to get work done, you\u2019ve failed.\n\n**The Takeaway:** Use slash commands as simple, personal shortcuts, not as a replacement for building a more intuitive `CLAUDE.md` and better-tooled agent.\n\n## [Custom Subagents](https://docs.claude.com/en/docs/claude-code/sub-agents)\n\nOn paper, custom subagents are Claude Code\u2019s most powerful feature for context management. The pitch is simple: a complex task requires `X` tokens of input context (e.g., how to run tests), accumulates `Y` tokens of working context, and produces a `Z` token answer. Running `N` tasks means `(X + Y + Z) * N` tokens in your main window.\n\nThe subagent solution is to farm out the `(X + Y) * N` work to specialized agents, which only return the final `Z` token answers, keeping your main context clean.\n\nI find they are a powerful idea that, in practice, _custom_ subagents create two new problems:\n\n1. **They Gatekeep Context:** If I make a `PythonTests` subagent, I\u2019ve now hidden all testing context from my _main_ agent. It can no longer reason holistically about a change. 
It\u2019s now forced to invoke the subagent just to know how to validate its own code.\n\n2. **They Force Human Workflows:** Worse, they force Claude into a rigid, human-defined workflow. I\u2019m now dictating _how_ it must delegate, which is the very problem I\u2019m trying to get the agent to solve for me.\n\n\nMy preferred alternative is to use Claude\u2019s built-in `Task(...)` feature to spawn clones of the _general_ agent.\n\nI put all my key context in the `CLAUDE.md`. Then, I let the _main agent_ decide when and how to delegate work to copies of itself. This gives me all the context-saving benefits of subagents without the drawbacks. The agent manages its own orchestration dynamically.\n\nIn my [\u201cBuilding Multi-Agent Systems (Part 2)\u201d](https://blog.sshh.io/p/building-multi-agent-systems-part) post, I called this the \u201cMaster-Clone\u201d architecture, and I strongly prefer it over the \u201cLead-Specialist\u201d model that custom subagents encourage.\n\n**The Takeaway:** Custom subagents are a brittle solution. Give your main agent the context (in `CLAUDE.md`) and let it use its own `Task/Explore(...)` feature to manage delegation.\n\n## [Resume, Continue, & History](https://docs.claude.com/en/docs/claude-code/common-workflows\\#resume-previous-conversations)\n\nOn a simple level, I use `claude --resume` and `claude --continue` frequently. They\u2019re great for restarting a bugged terminal or quickly rebooting an older session. I\u2019ll often `claude --resume` a session from days ago just to ask the agent to summarize how it overcame a specific error, which I then use to improve our `CLAUDE.md` and internal tooling.\n\nMore in the weeds, Claude Code stores all session history in `~/.claude/projects/` to tap into the raw historical session data. 
I have scripts that run meta-analysis on these logs, looking for common exceptions, permission requests, and error patterns to help improve agent-facing context.\n\n**The Takeaway:** Use `claude --resume` and `claude --continue` to restart sessions and uncover buried historical context.\n\n## [Hooks](https://docs.claude.com/en/docs/claude-code/hooks)\n\nHooks are huge. I don\u2019t use them for hobby projects, but they are critical for steering Claude in a complex enterprise repo. They are the deterministic \u201cmust-do\u201d rules that complement the \u201cshould-do\u201d suggestions in `CLAUDE.md`.\n\nWe use two types:\n\n1. **Block-at-Submit Hooks:** This is our primary strategy. We have a `PreToolUse` hook that wraps any `Bash(git commit)` command. It checks for a `/tmp/agent-pre-commit-pass` file, which our test script _only_ creates if all tests pass. If the file is missing, the hook blocks the commit, forcing Claude into a \u201ctest-and-fix\u201d loop until the build is green.\n\n2. **Hint Hooks:** These are simple, non-blocking hooks that provide \u201cfire-and-forget\u201d feedback if the agent is doing something suboptimal.\n\n\nWe intentionally do not use \u201cblock-at-write\u201d hooks (e.g., on `Edit` or `Write`). Blocking an agent mid-plan confuses or even \u201cfrustrates\u201d it. It\u2019s far more effective to let it finish its work and then check the final, completed result at the commit stage.\n\n**The Takeaway:** Use hooks to enforce state validation at commit time (`block-at-submit`). Avoid blocking at write time\u2014let the agent finish its plan, then check the final result.\n\n## [Planning Mode](https://youtu.be/QlWyrYuEC84?si=mQxc_iyVKmo3iJOd&t=915)\n\nPlanning is essential for any \u201clarge\u201d feature change with an AI IDE.\n\nFor my hobby projects, I exclusively use the built-in planning mode. 
It\u2019s a way to align with Claude before it starts, defining both _how_ to build something and the \u201cinspection checkpoints\u201d where it needs to stop and show me its work. Using this regularly builds a strong intuition for what minimal context is needed to get a good plan without Claude botching the implementation.\n\nIn our work monorepo, we\u2019ve started rolling out a custom planning tool built on the Claude Code SDK. It\u2019s similar to native plan mode but heavily prompted to align its outputs with our existing technical design format. It also enforces our internal best practices\u2014from code structure to data privacy and security\u2014out of the box. This lets our engineers \u201cvibe plan\u201d a new feature as if they were a senior architect (or at least that\u2019s the pitch).\n\n**The Takeaway:** Always use the built-in planning mode for complex changes to align on a plan before the agent starts working.\n\n## [Skills](https://docs.claude.com/en/docs/claude-code/skills)\n\nI agree with [Simon Willison](https://simonwillison.net/2025/Oct/16/claude-skills/): **Skills are (maybe) a bigger deal than MCP.**\n\nIf you\u2019ve been following my posts, you\u2019ll know I\u2019ve drifted away from MCP for most dev workflows, preferring to build simple CLIs instead (as I argued in [\u201cAI Can\u2019t Read Your Docs\u201d](https://blog.sshh.io/p/ai-cant-read-your-docs)). My mental model for agent autonomy has evolved into three stages:\n\n1. **Single Prompt:** Giving the agent all context in one massive prompt. (Brittle, doesn\u2019t scale).\n\n2. **Tool Calling:** The \u201cclassic\u201d agent model. We hand-craft tools and abstract away reality for the agent. (Better, but creates new abstractions and context bottlenecks).\n\n3. 
**[Scripting](https://blog.sshh.io/i/167598476/scripting-agents):** We give the agent access to the raw environment\u2014binaries, scripts, and docs\u2014and it writes code _on the fly_ to interact with them.\n\n\nWith this model in mind, **Agent Skills** are the obvious next feature. They are the formal productization of the \u201cScripting\u201d layer.\n\nIf, like me, you\u2019ve already been [favoring CLIs over MCP,](https://blog.sshh.io/i/171208815/pattern-choose-the-right-interface-cli-vs-mcp) you\u2019ve been implicitly getting the benefit of Skills all along. The `SKILL.md` file is just a more organized, shareable, and discoverable way to document these CLIs and scripts and expose them to the agent.\n\n**The Takeaway:** Skills are the right abstraction. They formalize the \u201cscripting\u201d-based agent model, which is more robust and flexible than the rigid, API-like model that MCP represents.\n\n## [MCP (Model Context Protocol)](https://modelcontextprotocol.io/docs/getting-started/intro)\n\nSkills don\u2019t mean MCP is dead (see also [\u201cEverything Wrong with MCP\u201d](https://blog.sshh.io/p/everything-wrong-with-mcp)). Previously, many built awful, context-heavy MCPs with dozens of tools that just mirrored a REST API (`read_thing_a()`, `read_thing_b()`, `update_thing_c()`).\n\nThe \u201cScripting\u201d model (now formalized by Skills) is better, but it needs a secure way to access the environment. This to me is the new, more focused role for MCP.\n\nInstead of a bloated API, an MCP should be a simple, secure gateway that provides a few powerful, high-level tools:\n\n- `download_raw_data(filters\u2026)`\n\n- `take_sensitive_gated_action(args\u2026)`\n\n- `execute_code_in_environment_with_state(code\u2026)`\n\n\nIn this model, MCP\u2019s job isn\u2019t to abstract reality for the agent; its job is to manage the auth, networking, and security boundaries and then get out of the way. 
It provides the _entry point_ for the agent, which then uses its scripting and `markdown` context to do the actual work.\n\nThe only MCP I still use is for [Playwright](https://github.com/microsoft/playwright-mcp), which makes sense: it\u2019s a complex, stateful environment. All my stateless tools (like Jira, AWS, GitHub) have been migrated to simple CLIs.\n\n**The Takeaway:** Use MCPs that act as data gateways. Give the agent one or two high-level tools (like a raw data dump API) that it can then script against.\n\n## [Claude Code SDK](https://docs.claude.com/en/api/agent-sdk/overview)\n\nClaude Code isn\u2019t just an interactive CLI; it\u2019s also a powerful SDK for building entirely new agents, for both coding and non-coding tasks. I\u2019ve started using it as my default agent framework over tools like LangChain/CrewAI for most new hobby projects.\n\nI use it in three main ways:\n\n1. **Massive Parallel Scripting:** For large-scale refactors, bug fixes, or migrations, I don\u2019t use the interactive chat. I write simple bash scripts that call `claude -p \"in /pathA change all refs from foo to bar\"` in parallel. This is far more scalable and controllable than trying to get the main agent to manage dozens of subagent tasks.\n\n2. **Building Internal Chat Tools:** The SDK is perfect for wrapping complex processes in a simple chat interface for non-technical users. One example is an installer that, on error, falls back to the Claude Code SDK to just _fix_ the problem for the user. Another is an in-house \u201c[v0-at-home](http://v0.dev/)\u201d tool that lets our design team vibe-code mock frontends in our in-house UI framework, ensuring their ideas are high-fidelity and the code is more directly usable in frontend production code.\n\n3. **Rapid Agent Prototyping:** This is my most common use. It\u2019s not just for coding. 
If I have an idea for any agentic task (e.g., a \u201cthreat investigation agent\u201d that uses custom CLIs or MCPs), I use the Claude Code SDK to quickly build and test the prototype before committing to a full, deployed scaffolding.\n\n\n**The Takeaway:** The Claude Code SDK is a powerful, general-purpose agent framework. Use it for batch-processing code, building internal tools, and rapidly prototyping new agents _before_ you reach for more complex frameworks.\n\n## [Claude Code GHA](https://github.com/anthropics/claude-code-action)\n\nThe Claude Code GitHub Action (GHA) is probably one of my favorite and most slept-on features. It\u2019s a simple concept: just run Claude Code in a GHA. But this simplicity is what makes it so powerful.\n\nIt\u2019s similar to [Cursor\u2019s background agents](https://cursor.com/docs/cloud-agent) or the Codex managed web UI but is far more customizable. You control the entire container and environment, giving you more access to data and, crucially, much stronger sandboxing and audit controls than any other product provides. Plus, it supports all the advanced features like Hooks and MCP.\n\nWe\u2019ve used it to build custom \u201cPR-from-anywhere\u201d tooling. Users can trigger a PR from Slack, Jira, or even a CloudWatch alert, and the GHA will fix the bug or add the feature and return a fully tested PR[1](https://blog.sshh.io/p/how-i-use-every-claude-code-feature#footnote-1-177742847).\n\nSince the GHA logs are the full agent logs, we have an ops process to regularly review these logs at a company level for common mistakes, bash errors, or unaligned engineering practices. This creates a data-driven flywheel: Bugs -> Improved CLAUDE.md / CLIs -> Better Agent.\n\n```\n$ query-claude-gha-logs --since 5d | claude -p \"see what the other claudes were getting stuck on and fix it, then put up a PR\"\n```\n\n**The Takeaway:** The GHA is the ultimate way to operationalize Claude Code. 
It turns it from a personal tool into a core, auditable, and self-improving part of your engineering system.\n\n## [settings.json](https://docs.claude.com/en/docs/claude-code/settings)\n\nFinally, I have a few specific `settings.json` configurations that I\u2019ve found essential for both hobby and professional work.\n\n- `HTTPS_PROXY`/`HTTP_PROXY`: This is great for debugging. I\u2019ll use it to inspect the raw traffic to see exactly what prompts Claude is sending. For background agents, it\u2019s also a powerful tool for fine-grained network sandboxing.\n\n- `MCP_TOOL_TIMEOUT`/`BASH_MAX_TIMEOUT_MS`: I bump these. I like running long, complex commands, and the default timeouts are often too conservative. I\u2019m honestly not sure if this is still needed now that bash background tasks are a thing, but I keep it just in case.\n\n- `ANTHROPIC_API_KEY`: At work, we use our enterprise API keys ([via apiKeyHelper](https://www.reddit.com/r/ClaudeAI/comments/1jwvssa/comment/mtt0urz/?utm_source=share&utm_medium=web3x&utm_name=web3xcss&utm_term=1&utm_content=share_button)). It shifts us from a \u201cper-seat\u201d license to \u201cusage-based\u201d pricing, which is a much better model for how we work.\n\n - It accounts for the _massive_ variance in developer usage (we\u2019ve seen 1:100x differences between engineers).\n\n - It lets engineers tinker with non-Claude-Code LLM scripts, all under our single enterprise account.\n- `\"permissions\"`: I\u2019ll occasionally self-audit the list of commands I\u2019ve allowed Claude to auto-run.\n\n\n**The Takeaway:** Your `settings.json` is a powerful place for advanced customization.\n\n## Conclusion\n\nThat was a lot, but hopefully, you find it useful. If you\u2019re not already using a CLI-based agent like Claude Code or Codex CLI, you probably should be. There are rarely good guides for these advanced features, so the only way to learn is to dive in.\n\n
[1](https://blog.sshh.io/p/how-i-use-every-claude-code-feature#footnote-anchor-1-177742847)\n\nTo me, a fairly interesting philosophical question is how many reviewers should a PR get that was generated directly from a customer request (no internal human prompter)? We\u2019ve settled on 2 human approvals for any AI-initiated PR for now, but it is kind of a weird paradigm shift (for me at least) when it\u2019s no longer a human making something for another human to review.\n\n#### Discussion about this post\n\n[Josh Devon](https://substack.com/profile/348221825-josh-devon?utm_source=substack-feed-item)\n\n[Nov 1](https://blog.sshh.io/p/how-i-use-every-claude-code-feature/comment/172623499 \"Nov 1, 2025, 8:17 PM\")\n\nGreat guide, just be careful with Skills! 
Here\u2019s how we hijacked a skill with an invisible prompt inject: [https://open.substack.com/pub/securetrajectories/p/claude-skill-hijack-invisible-sentence](https://open.substack.com/pub/securetrajectories/p/claude-skill-hijack-invisible-sentence)\n\n[Jacob Bumgarner](https://substack.com/profile/10115737-jacob-bumgarner?utm_source=substack-feed-item)\n\n[Nov 1](https://blog.sshh.io/p/how-i-use-every-claude-code-feature/comment/172656201 \"Nov 1, 2025, 11:01 PM\")\n\nWonderful write up. thank you.\n\nCan you expand on this part a bit?\n\n\\> I write simple bash scripts that call claude -p \u201cin /pathA change all refs from foo to bar\u201d in parallel.\n\nHow do you prevent the agents from overwriting the code each is writing? Switching branches for each call?",

- "title": "How I Use Every Claude Code Feature - by Shrivu Shankar",

- "description": "A brain dump of all the ways I've been using Claude Code.",

- "fetched_at": "2025-12-12T13:07:53.014577Z",

- "source_name": "Nick Nisi",

- "source_url": "https://nicknisi.com",

- "relevant_keyword": "claude"

- },

- {

- "name": "13\\\\\n\\\\\nJUN\\\\\n\\\\\n2025",

- "url": "https://nicknisi.com/posts/ai-tooling",

- "type": "article",

- "status": "success",